AI models are now widespread, but a new large-scale study finds that the companies behind them are falling short on transparency.

According to a recent article on the study, the problem is serious because policymakers need reliable information if they are to address the issues linked to poor regulation.

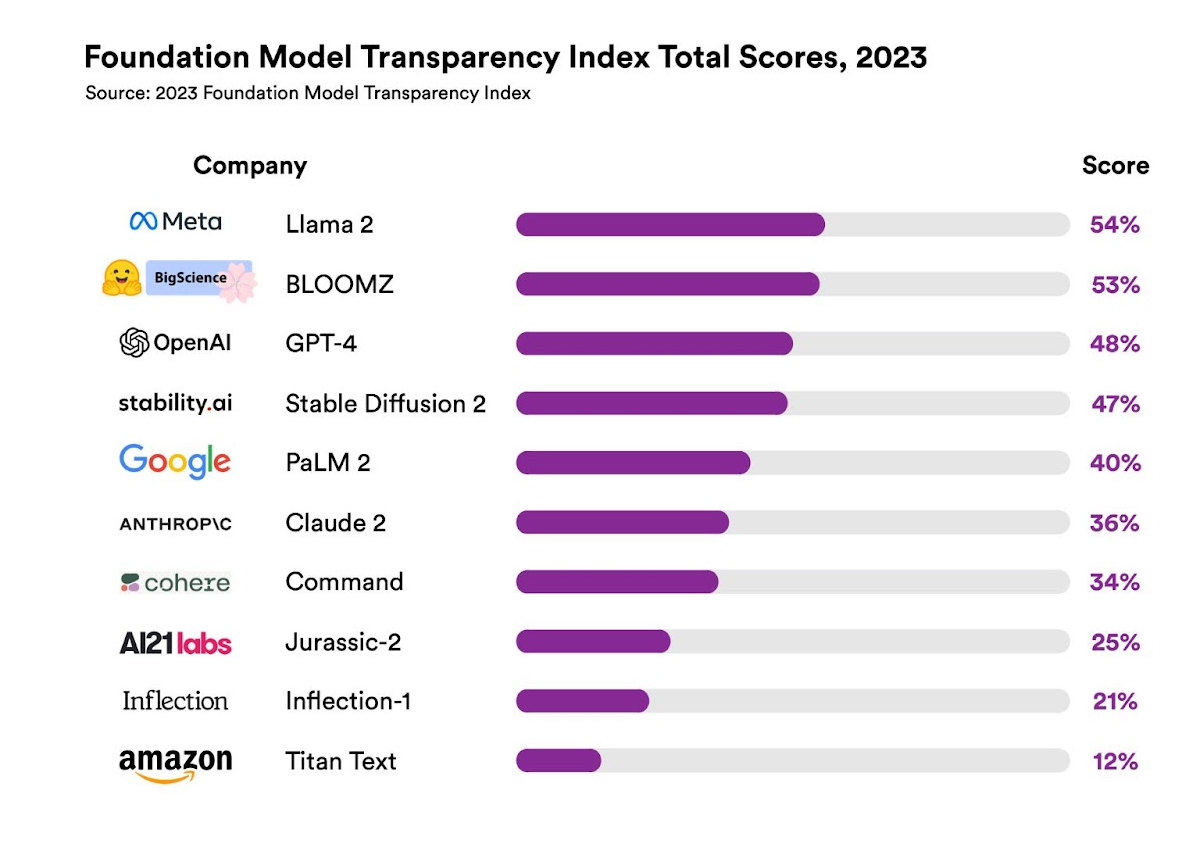

Researchers at Stanford University have created a new index, the Foundation Model Transparency Index, which ranks 10 leading AI companies on how transparent they are. The overall results are revealing.

Meta's Llama 2, released in July, came out on top with a score of 54%.

OpenAI's GPT-4 came third with 48%, while Google's PaLM 2 placed fifth at just 40%, slightly ahead of Anthropic at 36%.

Researcher Rishi Bommasani said the companies should be aiming for scores in the range of 80 to 100%.

The report's authors argue that low transparency makes it harder for policymakers to put the right policies in place to regulate such a powerful technology.

It also makes it harder for businesses to judge whether the technology can be relied on for their applications, for researchers to use it in their studies, and for anyone to understand the models' limitations.

Without transparency, regulators will struggle even to ask the right questions, let alone take appropriate action.

AI has arrived with a bang, and the hype is certainly real. But that excitement risks being overshadowed by the lack of transparency.

The technology has also raised serious questions about what to expect and how it might affect every member of society.

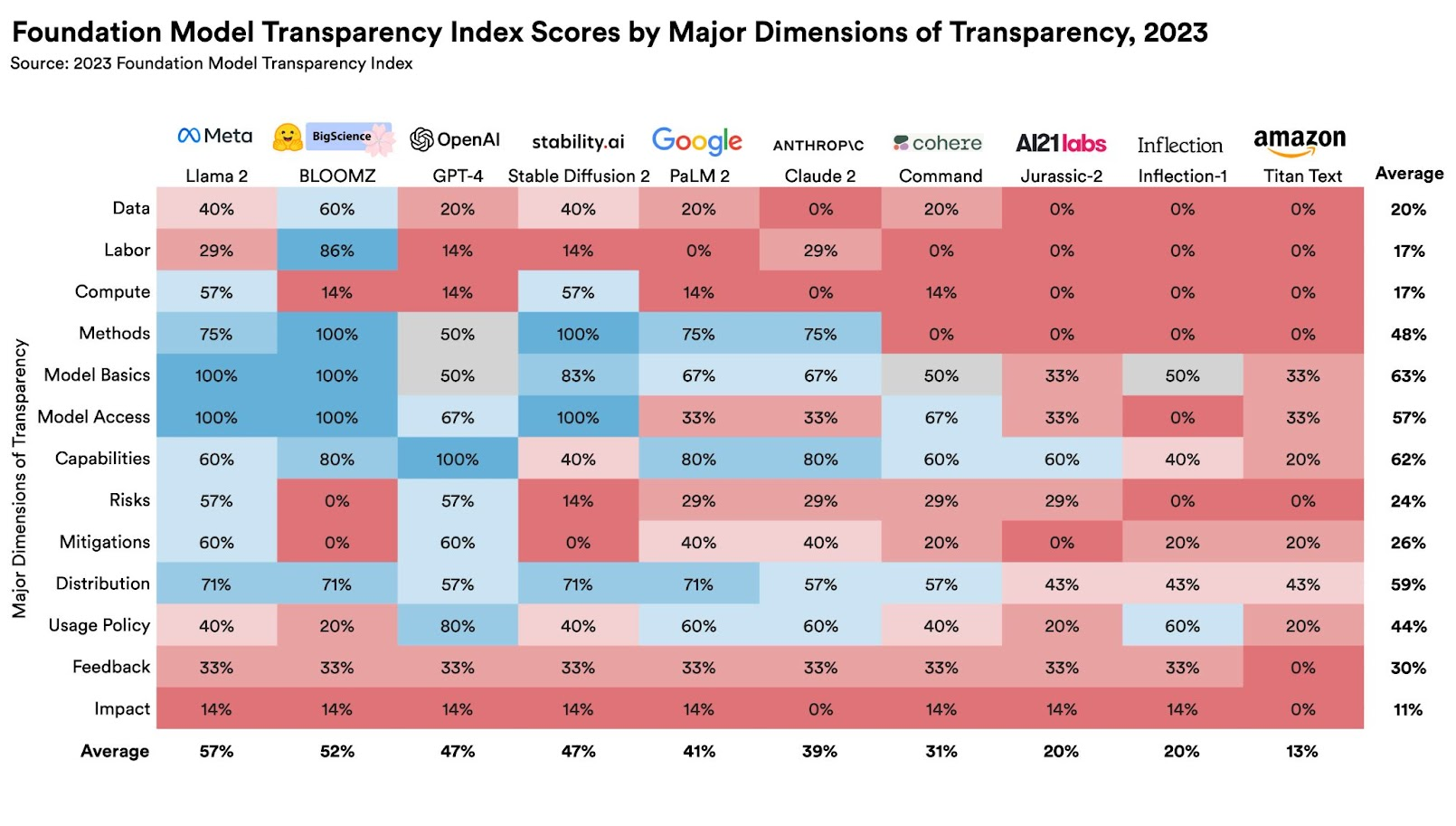

The Stanford study also found that no company discloses how many users rely on its model or the geographic regions where it is used.

Most AI companies also fail to disclose how much copyrighted data is used in their models, the researchers found.

Transparency is a major consideration in policymaking, and most of those leading AI policy in the EU recognize this. The same is true in Canada, the US, China, and the United Kingdom.

The EU in particular is pushing ahead at full speed with AI regulation and hopes to have its laws in place by the end of this year.

Read next: AI Might Put People’s Job Security At Risk But More Positions Are Being Created To Review AI Models And Their Inputs